Beyond Broken Links – Some Alternative Uses For Screaming Frog

Sorry if you were expecting the second part of my Local Domains Strategy post. That’ll be coming soon.

Site crawlers are an essential part of any SEO arsenal. You need to be able to pinpoint broken links, 302 redirects and other undesirable elements on your site, and a crawler is the quickest, easiest way to find them. What sets the Screaming Frog SEO Spider apart from most crawlers is how much else it can do: set up right, the Froggy can find pretty much anything on your site or in your backlink profile. Today, I’m going to talk through a few alternative ways you can use it.

Checking for Incorrectly Configured Canonical Tags

Finding Duplicated or Over-Limit Meta Titles or Descriptions

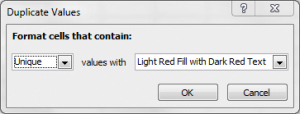

Another easy one that a lot of people miss. Once your crawl’s done, go to the Page Titles or Meta Description tab, export it to Excel and highlight the duplicates (Conditional Formatting > Highlight Cells Rules > Duplicate Values). From here, you can either do separate exports or, my preferred approach, do some further highlighting in Excel: go back to Conditional Formatting and highlight the ones that are above or below the optimal length for titles or descriptions.

If you’d like more information about the optimal meta title and description lengths for different search engines, you can find it in my unnecessarily long post from a couple of months back.
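If you’d rather script the check than click through Conditional Formatting, here’s a minimal Python sketch of the same idea. It assumes the export has been read into a list of dicts (for example with `csv.DictReader`). The "Address" and "Title 1" column names and the 30 to 60 character limits are placeholders I’ve picked for illustration, not recommended values, so swap in your own.

```python
from collections import Counter

# Placeholder limits in characters; replace with the limits you work to.
TITLE_LIMITS = (30, 60)


def audit_titles(rows, column="Title 1", limits=TITLE_LIMITS):
    """Flag duplicate and out-of-range values in an exported crawl.

    `rows` is a list of dicts, one per URL. The "Address" and "Title 1"
    column names are assumptions about the export format.
    """
    low, high = limits
    # Count every title once up front so duplicates can be spotted per row.
    counts = Counter(row[column].strip() for row in rows)
    report = []
    for row in rows:
        text = row[column].strip()
        issues = []
        if counts[text] > 1:
            issues.append("duplicate")
        if len(text) < low:
            issues.append("too short")
        elif len(text) > high:
            issues.append("too long")
        if issues:
            report.append((row["Address"], issues))
    return report
```

For descriptions, the same function works with `column="Meta Description 1"` and your preferred limits (again, that column name is an assumption about your export).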

Checking PPC Display URLs

We’ve got a lot of PPC bods at work. They’re very good at what they do, and they work with some amazing brands, several of which I’m lucky enough to work on too. However, when you’re working on such large sites and accounts and putting together tons of display URLs, you need a quick way to check them for errors. That’s where Screaming Frog can help out.

Before the campaigns go live, get the team to send you their display URLs as a .txt or .csv file. Run them in List mode, and be sure to go into the Configuration section and uncheck Check External Links. Flag any redirects or 404s to the team, and that’s a nice, clean campaign for them.
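Once the list crawl has finished and you’ve exported the results, picking out the URLs to send back to the team takes only a few lines. This is a sketch assuming a CSV export with "Address" and "Status Code" columns (adjust them to whatever your export actually uses). It treats any 3xx, 4xx or 5xx code as worth flagging, plus a blank or 0 status, which I’m assuming means no response.

```python
import csv
import io


def flag_display_urls(export_text):
    """Return (url, status) pairs worth raising with the PPC team.

    Assumes "Address" and "Status Code" columns in the exported CSV.
    """
    problems = []
    for row in csv.DictReader(io.StringIO(export_text)):
        # A blank or missing status is treated as 0, i.e. no response.
        code = int(row["Status Code"] or 0)
        if code == 0 or 300 <= code < 600:
            problems.append((row["Address"], code))
    return problems
```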

Checking Tracking Codes

This one’s been covered a lot since the tool came out, but mostly with a focus on Google Analytics. Google Analytics is essential, but you can also use Screaming Frog to check for any other tracking platform that puts a unique ID into the page source, including DoubleClick, Infinity Call Tracking, Clicky and Omniture.

Go into your site’s source, find your tracking code and copy its unique ID, then go to Custom inside Screaming Frog. From here, set one filter to Includes and one to Does Not Include, and paste your ID into both boxes. Once you’ve run your crawl, go to the Custom tab and export Filter 1 and Filter 2. If pages that should carry the code are showing up in Filter 2, have a word with the dev team.
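Under the hood, the two filters amount to a simple substring test on each page’s source. This hypothetical helper mimics that split, assuming you already have each page’s HTML in a dictionary keyed by URL. `UA-12345-1` in the test is a made-up ID.

```python
def split_by_tracking_id(pages, tracking_id):
    """Mimic an Includes / Does Not Include pair of custom filters.

    `pages` maps URL -> HTML source. Returns two lists of URLs: pages whose
    source contains the ID (Filter 1) and pages missing it (Filter 2).
    """
    includes, missing = [], []
    for url, html in pages.items():
        (includes if tracking_id in html else missing).append(url)
    return includes, missing
```

Anything that lands in the second list is the equivalent of a page showing up in Filter 2.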

Broken Link Prospecting

This one’s my new favourite. You need a couple of other toys to do it properly, but they’re well worth the investment.

Broken links are one of the main ways in for link builders, but it’s a technique that can be tough to scale. Fortunately, Screaming Frog offers a solution.

Firstly, you’re going to need a link prospector. I use quite a few, including the Citation Labs Link Prospector, but whichever you use, as long as you can get a big list of prospects into a spreadsheet, you should be good to go.

Run your prospecting report using your platform of choice, but be as specific as possible. You’re trying to scale your broken link building, but you don’t want such a huge list of pages to test that your crawl runs for a month; think about how many links are on the average web page and you’ll see why. All the prospectors I use let you drill down your exports a fair way, so once you’ve done that, you should be good to go.
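To put a rough number on it: a level-one crawl has to request every prospect page plus every link on it. Here’s a back-of-the-envelope estimate in Python. The 2,000 pages, 100 links per page and 5 URLs per second used below are hypothetical figures, and real crawls will usually be shorter because links shared between pages only need fetching once.

```python
def estimate_crawl_hours(pages, links_per_page, urls_per_second):
    """Rough upper bound on a level-one crawl: every page plus every outbound link."""
    total_urls = pages + pages * links_per_page
    return total_urls / urls_per_second / 3600
```

With those hypothetical figures, `estimate_crawl_hours(2000, 100, 5)` comes out at roughly 11.2 hours, so doubling the prospect list quickly turns an overnight crawl into a multi-day one.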

Most prospectors let you export your opportunities to CSV. Do that, then paste the URLs into a new .csv or .txt file; these are the pages we want to test.
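If the export has a lot of repeated pages, a short script can tidy it into a one-URL-per-line list. A minimal sketch, assuming a CSV with a column called "URL" (a placeholder, since prospectors label it differently):

```python
import csv
import io


def prospect_urls(export_text, column="URL"):
    """Pull a de-duplicated list of page URLs from a prospector CSV export.

    The "URL" column name is an assumption; check your own export.
    First-seen order is preserved.
    """
    seen, urls = set(), []
    for row in csv.DictReader(io.StringIO(export_text)):
        url = (row[column] or "").strip()
        if url and url not in seen:
            seen.add(url)
            urls.append(url)
    return urls
```

You can then write the result out with `"\n".join(urls)` to a .txt file and upload that in List mode.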

Switch Screaming Frog to List mode, but rather than running the usual list crawl, go into Configuration, check Check External Links and (this is the important part) set the crawl depth to one. It’ll then crawl the outbound links on those pages and, hopefully, give you a few opportunities to pick up links on very relevant pages. I’ve used this one quite a bit recently and had some good success, but only when the prospector is set up properly. There’s a great post here offering some excellent settings for the Citation Labs Link Prospector, which is well worth a read.
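Once the crawl’s done and you’ve exported the outbound links with their status codes, grouping the broken ones by the page that links to them gives you a ready-made outreach list. This sketch assumes hypothetical "Source", "Destination" and "Status Code" columns and counts a blank or 0 status (which I’m assuming means no response) as broken, alongside 4xx and 5xx codes.

```python
import csv
import io
from collections import defaultdict


def broken_link_opportunities(export_text):
    """Group broken outbound links by the prospect page that links to them.

    Column names are placeholders; match them to your own export.
    """
    opportunities = defaultdict(list)
    for row in csv.DictReader(io.StringIO(export_text)):
        code = int(row["Status Code"] or 0)  # blank -> 0 (no response)
        if code == 0 or code >= 400:
            opportunities[row["Source"]].append(row["Destination"])
    return dict(opportunities)
```

Each key is a relevant page to pitch, and each value is the dead link you can offer to replace.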

And We’re Done

There’s so much you can do with this tool that I’ve barely scratched the surface. I’d love to hear other ways you guys are using it, so leave me a comment if there are any I’ve missed. Happy Frogging.